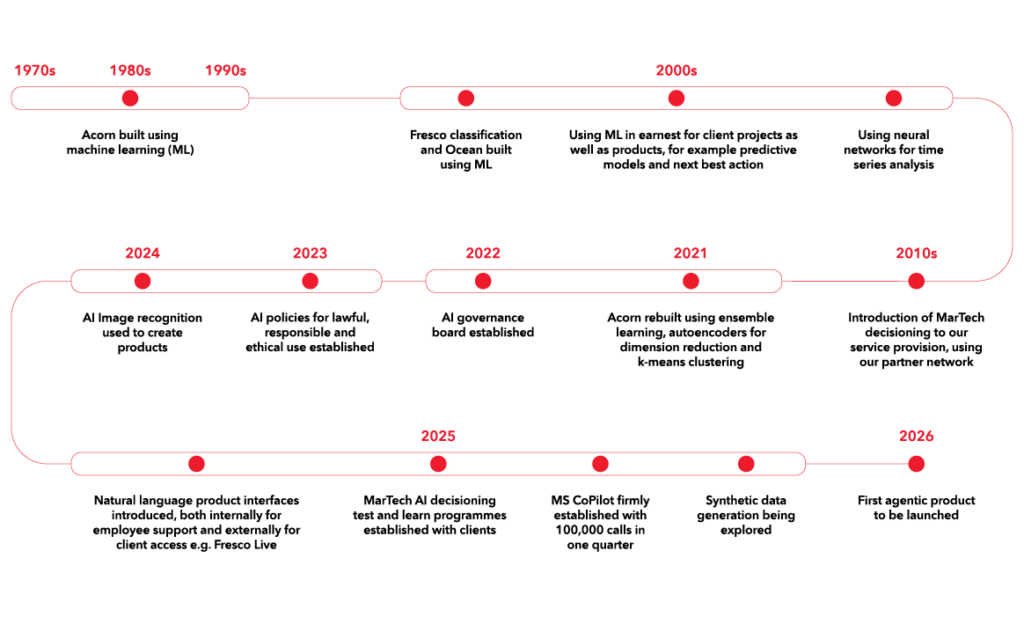

Artificial Intelligence (AI) has been part of CACI’s story for years. It has been driving innovation, improving experiences and shaping the way we work since the 1970s and the advent of machine learning. Progress, however, means nothing without responsibility. That’s why we’ve embedded ethical principles into every AI decision we make. This ensures transparency, fairness and trust. Our commitment is simple: to use AI in ways that empower our people, clients and the world around us.

What is AI to us?

At CACI, AI isn’t just a technology. It’s a responsibility. For us, AI means creating smarter solutions that empower people, enhance decision-making and deliver meaningful outcomes for clients. We see AI as a tool to unlock innovation, but always within a framework of trust, transparency and ethics. Every advancement we make is guided by principles that prioritise fairness, accountability and lawful use, ensuring that progress never comes at the expense of integrity. AI is how we shape the future: responsibly and with purpose.

Learn more about our work with AI through our case studies or related resources.

Our four categories of AI

Machine learning

Since CACI’s inception, we have used machine learning in algorithm building and insight generation. In the more recent development of AI, deep learning techniques have emerged which allow us to work with unstructured data as well as structured data, and at a far greater scale.

Read more about Machine learning

Generative AI and LLMs

CACI uses generative AI and LLMs for five purposes: summarisation and creation; retrieval and knowledge access; ideation and strategy; personalisation and customer experience; and automation and efficiency. Where appropriate, we are building natural language interfaces to our client documentation and guidelines. We are training teams to interpret outputs sensibly, both for our own work and in support of our clients.

Martech tool applications

CACI partners with several platform providers that increasingly use AI decisioning and content generation as part of their omni-channel execution. We ensure there is a human in the loop at the appropriate times, testing the boundaries of where these tools surpass human capability and where humans should provide a more nuanced view.

Read more about Martech tool applications

Agentic AI

Combining the Model Context Protocol with our in-house engineering skills, we are increasingly giving clients access to our products and tools without requiring the technical or analytical skills that complex interfaces demand. Our approach is to let users ask natural language questions, then have an AI agent identify the appropriate tool.

Our history with AI

Four AI categories

Find out more on each of our AI categories.

‘Traditional’ and ‘less traditional’ machine learning techniques

There are many techniques that fall under the AI banner, many of which have been used for quite some time now. Firstly, there is dimensionality reduction. This makes data easier for a model to consume (at CACI we use LDA, t-SNE and PCA). Next are classification and regression. These are where we use previous knowledge to predict what is most likely to happen in future, also known as supervised learning (at CACI we use Ridge/Lasso Regression, Linear Regression, Decision Trees, Naïve Bayes, K-NN and SVM). Finally, we have clustering and pattern search. Here we are looking for groups in the data that display common characteristics. This is also known as unsupervised learning (at CACI we use Fuzzy C-Means and K-Means).

These are all individual techniques which can be combined and complemented with approaches that maximise their strength, such as stacking, bagging and boosting.
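To make the clustering idea above concrete, here is a minimal sketch of K-Means in plain Python. It is an illustration only, not CACI's implementation: the `k_means` function, the `customers` data and the choice of starting centroids are all assumptions made for the example.

```python
import math


def k_means(points, centroids, iterations=10):
    """Plain K-Means: assign each point to its nearest centroid, then move
    each centroid to the mean of the points assigned to it."""
    centroids = [tuple(c) for c in centroids]
    labels = []
    for _ in range(iterations):
        # Assignment step: index of the closest centroid for every point.
        labels = [
            min(range(len(centroids)), key=lambda k: math.dist(p, centroids[k]))
            for p in points
        ]
        # Update step: recompute each centroid as the mean of its members.
        new_centroids = []
        for k in range(len(centroids)):
            members = [p for p, label in zip(points, labels) if label == k]
            if members:
                new_centroids.append(
                    tuple(sum(axis) / len(members) for axis in zip(*members))
                )
            else:
                # Keep an empty cluster's centroid where it was.
                new_centroids.append(centroids[k])
        if new_centroids == centroids:
            break  # Converged: nothing moved.
        centroids = new_centroids
    return labels, centroids


# Hypothetical customers: (visits per month, average basket value).
customers = [(1, 10), (2, 12), (1, 11), (9, 80), (10, 85), (8, 78)]
labels, centroids = k_means(customers, centroids=[customers[0], customers[3]])
print(labels)
```

No labels are supplied in advance; the two groups emerge purely from how close the points sit to one another, which is what makes this unsupervised learning.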

These techniques are applied to use cases involving structured numerical data. That is why we refer to them as traditional machine learning: it is the data that is traditional. The outputs can include predicting churn, cross sell, up sell, segmentation, pricing, value modelling, spatial interaction modelling and forecasting. Additionally, time series analysis can be used for system monitoring to identify out-of-pattern behaviours, for example spikes or dips in system availability and unexpected behaviour.
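As a simple illustration of spotting out-of-pattern behaviour in a time series, the sketch below flags any reading that sits far from the mean of the readings just before it (a rolling z-score). The `out_of_pattern` function, the window size, the threshold and the `availability` figures are assumptions chosen for the example; production monitoring would typically use richer models.

```python
from statistics import mean, stdev


def out_of_pattern(values, window=5, threshold=3.0):
    """Return the indexes of values that lie more than `threshold` standard
    deviations from the mean of the preceding `window` values."""
    flagged = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            continue  # A perfectly flat history cannot be scored this way.
        if abs(values[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


# Hypothetical hourly system availability (%), with one sharp dip.
availability = [99.9, 99.8, 99.9, 99.7, 99.8, 99.9, 92.0, 99.8, 99.9, 99.8]
print(out_of_pattern(availability))
```

Note that a large dip inflates the standard deviation of the windows that follow it, so readings straight after an incident are scored more leniently. That is a known limitation of this simple approach.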

More recently, deep learning techniques have emerged which remove the need to manually structure the data. These techniques learn more complex patterns from larger, more diverse and unstructured datasets like images, audio and text. The techniques we use here are convolutional neural networks for spatial relationships (for example pixels in an image) and generative models. Generative models learn the data in a way which allows them to create new synthetic data points that resemble the original data. Some techniques we use here are GPT, autoencoders, transformers, recurrent neural networks (RNNs) and generative adversarial networks (GANs).

These techniques are applied to high-volume text and image analysis (big data). For example, we have used convolutional neural networks to analyse satellite imagery to identify wealthy geographic areas, and autoencoders to support the development of discrete segments. We created 65 segments from a possible 8.8 trillion combinations of data points for Acorn. We also have several examples where we have created classifications by summarising free-form text, such as call text and customer satisfaction feedback forms.

Related resources

Case study unsupervised learning – Maps

Case study unsupervised learning – Acorn

Case study supervised learning – Ocean

Case study supervised learning – Nationwide

Case study supervised learning – Satellite imagery

Martech tool applications

We partner with several platform providers to support our clients in their execution of omni-channel personalised digital marketing. This includes any channel where the identity of the user is known to the client.

In doing so, we are constantly testing AI-driven functionality within those platforms to identify where it is best used as a standalone function and where it works best in combination with human input. The challenge all clients have is where to draw the line between human and technology. We guide clients through this process, considering factors such as data availability, existing tech stack and marketing objectives.

AI decisioning (AID) has become a key area of focus. As advanced data techniques help us learn more about what consumers want, we can combine that learning with the most efficient and intelligent means of campaign orchestration.

This helps improve efficiency whilst keeping an eye on the customer experience, with humans checking a meaningful proportion of the outputs. Human oversight remains essential to ensure guardrails are in place and to ensure results are continually measured and optimised for targeting, message content and product/message combinations.

Some of the partners we work with include Braze, Bloomreach and Adobe.

Related resources

Case study – RAC

Case study – easyJet

Solution – Martech Implementation

Solution – Execution Implementation

Solution – Customer Experience

News – Award win for service transformation

Generative AI and LLMs

Sometimes it can be a challenge to work out how to put this new ability to speak to a machine using existing foundation models to good use. So far, we have grouped its uses into five broad categories, which can be applied in almost any business context. Our job is to determine what we want to achieve and choose the right approach for doing so. Below is a table with those categories and some examples of how we are using them, either to help ourselves get better at working with our clients or directly in support of the client need.

| Category | Where it applies | Examples |
| --- | --- | --- |
| Summarisation and creation | Where you have a lot of existing text and want to create a different version of it | Creating proposals, policies, guidance and FAQs from complex documentation; meeting notes, action generation and project plans |
| Retrieval and knowledge access | Where you want to give easy access to data or information to a set of people without the skills to data mine or read vast quantities of data | Chatbots providing natural language interfaces to existing materials, both customer-facing and internal (for example Fresco Live, PinRoutes) |
| Ideation and strategy | Where you have a blank sheet of paper and want a prompt to get you started | Strategic planning, job roles and understanding new processes |
| Personalisation and CX | Where you combine customer understanding and content assets to give the customer the best experience of your organisation in your tone of voice | Generating content that can be repurposed depending on different data prompts, triggers or translation requirements (e.g. easyJet) |
| Automation and efficiency | Where you are removing rework or enhancing existing development skills | Tools like GitHub Copilot, which is a specialist and valuable tool for shared learning and efficient code use but is still an LLM at its core |

We are of course cognisant that LLMs are not perfect: they do hallucinate. We have a two-pronged approach to handling that:

a) training and guidelines for individuals in how to prompt and then how to interpret, challenge and critically evaluate the output; and

b) technical guardrails (be that within our partner tools or within our own products) to minimise the risks in the first place.

Separately, and aligned to our machine learning AI pillar, we support clients with text interpretation, text parsing and clustering of text patterns. For example, summarising research data, undertaking topic detection, sentiment analysis or text quality assessment.

Related resources:

Case study – easyJet

Case study – PinRoutes

Webinar – Fresco Live

Blog – Utilising the latest LLMs

Agentic AI

Agentic AI combines many of our other AI skills into one seamless experience and comes with a new set of opportunities and risks to watch for.

The system we are in the process of building is known as the Intelligence Platform (IP). Its purpose is to allow users to access pre-existing tools and data products in natural language, via a large language model (LLM), without necessarily being aware of which tool will be used to respond to their question.

The rationale for creating the platform is to allow greater democratisation of data and data analysis tools without the user needing to have a deep understanding of how to use the analytical tools.

The AI agent uses artificial intelligence to understand the user’s natural language input and to determine which tool is the correct one for the task. It then serves up the answer to the user in the most appropriate manner. It allows the user to pose the initial question (prompt) and then interact with the analytical answer, refining their questions to develop further insights.

This then allows the user to draw conclusions and make decisions that will be applied in the real world. All input prompts are supplied as text or CSV files (for example store lists or postcode lists), but not personal data. Multi-modal outputs are included, specifically charts, maps and text.

We use the Model Context Protocol, which fundamentally means we have:

a) Analytical tools that undertake analytical jobs, each given a text description so that the AI agent can understand what the tool does and what its purpose is

b) A data layer that feeds those tools to do the relevant analysis using the relevant data

c) An AI agent which translates a human’s question into a decision as to which tool to use, the data that is required for that tool and then the translation of the answer back to the human
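The three parts listed above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the Model Context Protocol SDK and not the Intelligence Platform itself: the `Tool` class, the two tools, the `DATA_LAYER` contents and the keyword-overlap routing in `choose_tool` are all hypothetical. In a real system an LLM reads the tool descriptions and makes the choice.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Tool:
    """a) An analytical tool plus the text description the agent reads."""
    name: str
    description: str
    run: Callable[[dict], str]


# b) A hypothetical data layer that feeds the tools.
DATA_LAYER = {"store_counts": {"Leeds": 12, "Bristol": 7}}


def count_stores(data: dict) -> str:
    counts = data["store_counts"]
    return f"{sum(counts.values())} stores across {len(counts)} cities"


def largest_city(data: dict) -> str:
    counts = data["store_counts"]
    city = max(counts, key=counts.get)
    return f"{city} has the most stores ({counts[city]})"


TOOLS = [
    Tool("count_stores", "count how many stores there are in total", count_stores),
    Tool("largest_city", "find which city has the most stores", largest_city),
]


def choose_tool(question: str, tools: list) -> Tool:
    """c) Stand-in for the agent: pick the tool whose description shares
    the most words with the question (an LLM would do this in practice)."""
    words = set(question.lower().replace("?", "").split())
    return max(tools, key=lambda t: len(words & set(t.description.split())))


def answer(question: str) -> str:
    tool = choose_tool(question, TOOLS)
    # Translate the tool's result back into a reply for the user.
    return f"[{tool.name}] {tool.run(DATA_LAYER)}"


print(answer("Which city has the most stores?"))
print(answer("How many stores are there in total?"))
```

The point of the design is that the user never names a tool. The text description attached to each tool is what lets the agent route the question, so the quality of those descriptions matters as much as the tools themselves.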

There can be multiple tools and multiple agents all talking to each other using lots of different combinations of data at any one time to fulfil a question. We are in the process of building this, ensuring all the relevant guardrails and prompt injection safety mechanisms are in place.

A lot of the development time is spent on ensuring our data is AI-ready, with a focus on structures, metadata and enhanced security alongside the standard data hygiene of accuracy, cleanliness, adequacy, relevance and recency.

The current focus is to build an addition to our geographic spatial data software. We expect to launch our first agentic product in the summer of 2026.

Staying innovative

Innovation drives progress – we don’t just react to change, we create it.

Speak to one of our data and AI experts

We’re ready to help you cut the complexity out of data and AI.